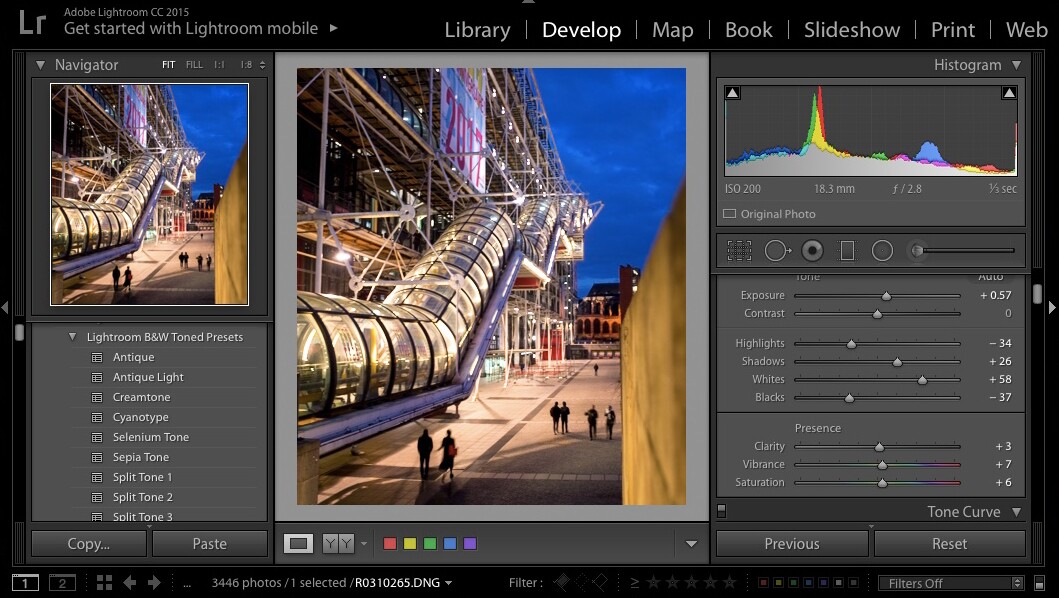

#Capturing reality raw files software

Everything had to be designed and built from the ground up: custom capture software, custom-built recording hardware, storage configurations, software storage, a custom cloud processing farm and network solutions. We needed access to RAW streamed data at a higher bit depth, with no rolling shutter, at higher resolution and synced. Only in the last 6 months has this really been possible at the higher end. We had to self-fund all this research, without VC backing. This all costs money, which is why it has taken us so long to proceed.

#Capturing reality raw files movie

We could have continued with this research, but the problem with these DSLR cameras is that their resolution is too low, they cannot be synchronised (we hand-synced by sound .wav in After Effects), they suffer from rolling shutter artefacts and they can only output heavily compressed movie files.

Our system is a little different to the others as it does not rely on green screen silhouette reconstruction, active scanning, stereo pairs or low-end USB cameras. We came at this problem from the hardest possible angle: high-end, high-resolution, per-pixel reconstruction using machine vision and modern software tools like Agisoft Photoscan or Capturing Reality.

We use cameras that can record RAW footage (at up to 12-bit) at 6K resolution, streamed to disk at up to 3.4GB/s. Our baseline capture rate is 30fps, but we can also shoot at 60fps or 120fps depending on the ROI used. Higher frame rates will reduce capture resolution.

This has been a huge technical challenge, something that has taken us over 5 years to solve since our early days in human scanning 10 years ago. It's also been expensive to develop on our own.

We dream of using USB Point Grey cameras at 2K resolution. Our 6K cameras in resolution comparison to 2K.

Motion scanning, 4D, volumetric capture, holographic, video scanning, videogrammetry, lightfields: these are terms that have appeared over the last few years in relation to this field. Here's a typical example of an “InsideOut” Lightfield capture system, similar to what Facebook showcased recently. So we reversed that, and we've designed and built our own “OutsideIn” 4D Motion Scanning System, part IR, part IDA-Tronic in design.

Some of our early primitive 4D DSLR research dates back to 2011. These were our first tests using standard DSLR cameras set to HD.
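The capture figures quoted above (12-bit RAW, 6K resolution, 30/60/120fps, higher frame rates reducing resolution) can be related with a rough back-of-envelope calculation. The sketch below is only illustrative: the 6144×3160 sensor geometry, the assumption of tightly packed pixels and both function names are hypothetical and not taken from our actual system, and the post does not say whether the 3.4GB/s figure is per camera or aggregate.

```python
def raw_rate_bytes_per_s(width, height, bit_depth, fps):
    """Uncompressed data rate of one camera stream in bytes per second.

    Assumes tightly packed pixels with no container or protocol overhead.
    """
    bits_per_frame = width * height * bit_depth
    return bits_per_frame * fps / 8


def max_roi_height(width, bit_depth, fps, budget_bytes_per_s):
    """Largest ROI height (in rows) that fits a fixed bandwidth budget.

    Keeps the full sensor width and trades rows for frame rate.
    """
    bits_per_row = width * bit_depth
    return int((budget_bytes_per_s * 8) // (bits_per_row * fps))


# Hypothetical "6K" geometry, chosen only for illustration.
WIDTH, HEIGHT, BIT_DEPTH = 6144, 3160, 12

# Bandwidth needed for a full frame at the 30fps baseline.
base_rate = raw_rate_bytes_per_s(WIDTH, HEIGHT, BIT_DEPTH, 30)
print(f"30fps full frame: {base_rate / 1e9:.2f} GB/s per camera")

# Rows that still fit in the same bandwidth at higher frame rates.
for fps in (60, 120):
    rows = max_roi_height(WIDTH, BIT_DEPTH, fps, base_rate)
    print(f"{fps}fps within the same budget: ROI of {WIDTH}x{rows}")
```

Under these assumptions, doubling the frame rate at a fixed bandwidth halves the number of rows that can be read out, which is one way to read the trade-off between frame rate, ROI and capture resolution.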

#Capturing reality raw files for free

We've been in stealth mode for the last 18 months, working tirelessly on our new AEON motion scanning system, something that has taken us over 5 years to develop from inception to reality. Carrying on from our 4D scanning research back in 2011, we've been working on finalizing our “OutsideIn” 4D motion scanning system and trying to understand the capture, storage and playback obstacles.

The word aeon /ˈiːɒn/, also spelled eon (in American English) and æon, originally meant “life”, “vital force” or “being”, “generation” or “a period of time”, though it tended to be translated as “age” in the sense of “ages”, “forever”, “timeless” or “for eternity”.

The most popular usage of videogrammetry dates back to the late 90s. It was used extensively by George Borshukov and team on The Matrix films. It's since been used in various forms in VFX and games, most notably in the Harry Potter films using a custom-built rig by MPC, and more recently by Digital Air in Ghost in the Shell. George Borshukov's work was a catalyst of inspiration for us for many years.

It's since spawned many similar variations, whether it be the incredible work by Oliver Bao and team at Depth Analysis (L.A. Noire), the pioneering stereo pair approach by Colin Urquhart and team at Dimensional Imaging (DI4D), active IR pattern scanning like 3DMD, or the phosphorescent paint approach by the now troubled MOVA Contour. Not forgetting the incredible high-fidelity research by Disney Research Zürich, or other techniques like green screen silhouette reconstruction by the visionaries at 4DViews and Microsoft Research. 4DViews really paved the way for free-viewpoint media.